Some features of the framework will not work, like some report Adapters, however, the PSO core is working really great. I've used the PyPSO subversion release 0.11. Since it's not finished yet, you can download from the subversion repository from here.

Here is the source code I've used to minimize the De Jong's Sphere function (Special thanks to Christian Perone who inspired me to do this work) :

"""

PyPsoS60Demo.py

Author: Marcel Pinheiro Caraciolo

caraciol@gmail.com

License: GPL3

"""

BLUE = 0x0000ff

RED = 0xff0000

BLACK = 0x000000

WHITE = 0xffffff

import e32, graphics, appuifw

print "Loading PyPSO modules... ",

e32.ao_yield()

from pypso import Particle1D

from pypso import GlobalTopology

from pypso import Pso

from pypso import Consts

img = None

coords = []

graphMode = False

canvas = None

w = h = 0

print " done !"

e32.ao_yield()

def handle_redraw(rect):

if img is not None:

canvas.blit(img)

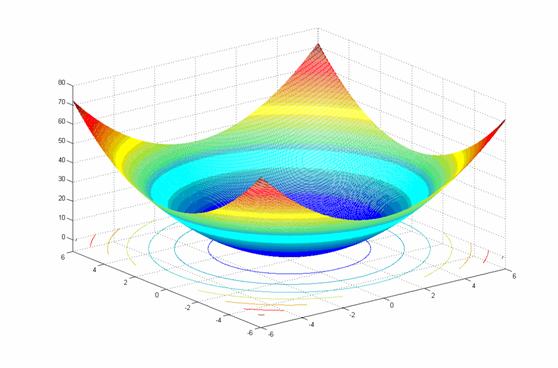

def sphere(particle):

total = 0.0

for value in particle.position:

total += (value ** 2.0)

return total

def pso_callback(pso_engine):

it = pso_engine.getCurrentStep()

best = pso_engine.bestParticle()

if graphMode:

img.clear(BLACK)

img.text((5, 15), u"Iteration %d - Best Fitness: %.2f" % (it,best.ownBestFitness), WHITE, font=('normal', 14, appuifw.STYLE_BOLD))

img.line((int(w/2),0,int(w/2),h),WHITE)

img.line((0,int(h/2),w,int(h/2)),WHITE)

cx = int(w/2) / int(best.getParam("rangePosmax"))

cy = int(-h/2) / int(best.getParam("rangePosmax"))

for particle in pso_engine.topology:

img.point([int(cx*particle.position[0]+ (w/2)),int(cy*particle.position[1]+(h/2)),5,5],BLUE,width=4)

img.point([int(cx*best.ownBestPosition[0]+ (w/2)),int(cy*best.ownBestPosition[1]+(h/2)),5,5],RED,width=4)

handle_redraw(())

else:

print "Iteration %d - Best Fitness: %.2f" % (it, best.ownBestFitness)

e32.ao_yield()

return False

def showBestParticle(best):

global graphMode

if graphMode:

img.clear(BLACK)

img.text((5, 15), u"Best particle fitness: %.2f" % best.ownBestFitness, WHITE, font=('normal', 14, appuifw.STYLE_BOLD))

img.line((int(w/2),0,int(w/2),h),WHITE)

img.line((0,int(h/2),w,int(h/2)),WHITE)

cx = int(w/2) / int(best.getParam("rangePosmax"))

cy = int(-h/2) / int(best.getParam("rangePosmax"))

img.point([int(cx*best.ownBestPosition[0]+ (w/2)),int(cy*best.ownBestPosition[1]+(h/2))],RED,width=7)

handle_redraw(())

e32.ao_sleep(4)

else:

print "\nBest particle fitness: %.2f" % (best.ownBestFitness,)

if __name__ == "__main__":

global graphMode, canvas, w, h

data = appuifw.query(u"Do you want to run on graphics mode ?", "query")

graphMode = data or False

if graphMode:

canvas = appuifw.Canvas(redraw_callback=handle_redraw)

appuifw.app.body = canvas

appuifw.app.screen = 'full'

appuifw.app.orientation = 'landscape'

w,h = canvas.size

img = graphics.Image.new((w,h))

img.clear(BLACK)

handle_redraw(())

e32.ao_yield()

#Parameters

dimmensions = 5

swarm_size = 30

timeSteps = 100

particleRep = Particle1D.Particle1D(dimmensions)

particleRep.setParams(rangePosmin=-5.12, rangePosmax=5.13, rangeVelmin=-5.12, rangeVelmax=5.13, bestFitness= 0.0 ,roundDecimal=2)

particleRep.evaluator.set(sphere)

topology = GlobalTopology.GlobalTopology(particleRep)

pso = Pso.SimplePSO(topology)

pso.setTimeSteps(timeSteps)

pso.setPsoType(Consts.psoType["CONSTRICTED"])

pso.terminationCriteria.set(Pso.FitnessScoreCriteria)

pso.stepCallback.set(pso_callback)

pso.setSwarmSize(swarm_size)

pso.execute()

best = pso.bestParticle()

showBestParticle(best)

To make things more interesting, i've decided to put a simple graphic mode, when the user can choose to see the simulation in graphics mode or console mode. So as soon as you start the PyPsoS60Demo.py script, a dialog will show up to ask which mode the user prefer to see the simulation. You can see the video of the graphics mode running below.

Each point is a particle and they're updating their position through the simulation and since the best optimal solution of the problem is the point (0,0), in the end of the simulation, the particles will be close to the best solution. You can see that the red point is the best particle of all swarm.

You can note at the source code the use of the module "e32" of the PyS60, this is used to process pending events, so we can follow the statistics of the current iteration while it evolves.

I hope you enjoyed this work, the next step is to port the Travelling Salesman Problem to cellphone!

You can download the script above here.

Marcel Pinheiro Caraciolo

![PythonBrasil[9]](http://2013.pythonbrasil.org.br/divulgue/no-seu-site/pythonbrasil9_en_halfbanner.gif)